Recent optimizations

After unplanned health related delay here comes third article of the series about recent optimizations that started with pool allocator and followed with comment archive. This time there won't be any charts, sorry. Also, node operators won't find anything super interesting either, because the optimization(s) I'm going to describe are focused on live mode and with current traffic levels they change 99% waiting for work into maybe 99.4% waiting for work, not very sexy, right? It is only at times of saturated traffic with big blocks where the impact of the changes is helping nodes in processing transactions in timely manner (that also means it helps in survival during flood attacks).

Most of you probably feel Hive won't be needing to allow 2MB blocks any time soon, and you are most likely right, so live focused optimizations seem like a wasted effort, but there are practical consequences. As you had an example in previous article some optimizations are trade-offs - you sacrifice something to gain more in another area. Speeding up transaction processing leaves us with more wiggle room for such trade-offs.

The description might be a bit chaotic, because that's how the development went. Code is unruly sometimes, refusing to cooperate :o)

The optimizations described here are contained in MR!1526 and cover point 11 of my dream list for Hive. That merge request comes with extra changes.

Measurement method

If the effects of the optimizations are so elusive, how do I know that there is any performance gain in the first place? The answer is "colony+queen cycle". It is a cooperative work of two plugins, one for creating large amount of transactions, the other for producing blocks out of them. Yes, I have plans to describe those plugins in detail, but for now only some points are important:

- when talking about "colony+queen cycle", unless otherwise noted, I'll mean 2MB blocks filled to the brim with typical mix of spammable transactions (mostly custom jsons with some articles/replies, votes and transfers)

queenprovides statistics once test ends - average timing offull block cyclemeans that much time was spent on producing, signing and processing enough transactions bycolonyto fill up block to desired level (full 2MB in our case), and subsequent production and application of that block byqueen- each test produces over 14.5M transactions and packs them in a bit over 2000 blocks, most of the time I've run at least 5 such tests for each version to get average timing

- the test is run with SHM on RAM disk and since comment archive was introduced it also uses

comment-archive = MEMORY - the optimizations from this article were mostly done before pool allocator and comment archive, but certain changes made it hard to rebase, so in the end they were introduced to

developas almost last (but before application of pool allocator to undo state) - neither

colonynorqueenare meant to run benchmarks - both plugins use every shortcut available to run fast, so the numbers I'll present below do not reflect timings that you might see in real mainnet work (hivedin mainnet live mode has more work to do, because it cannot make the same assumptions that the plugins here do); however the changes in those numbers reflect impact of particular optimizations on performance - the test uses

single_sign_universal_block_logas its base

To get above mentioned block log you can run CI script locally (takes a while). You can also extract it from CI job:

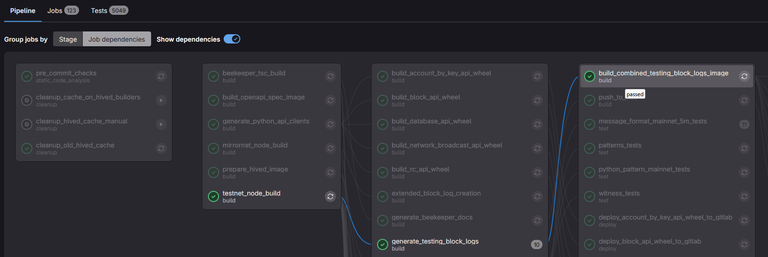

- go to some recently passed CI pipeline and locate

build_combined_testing_block_logs_image- open it and look for name of the image, f.e.

registry.gitlab.syncad.com/hive/hive/testing-block-logs:combined-2a21975c00aa845c24a01c343c3ea2a4fc4d7f5b9cc09b1f9ea45b0fdb457475- supplement variables with proper values and execute the following script (also correct path to the extraction script - the following assumes you are at the root of

hivesources):

#!/bin/bash

IMAGE="" # f.e. "registry.gitlab.syncad.com/hive/hive/testing-block-logs:combined-2a21975c00aa845c24a01c343c3ea2a4fc4d7f5b9cc09b1f9ea45b0fdb457475"

SAVE_TO_DIR="" # full path to where you want to extract block logs, f.e. "/home/myusername/block_logs/"

IN_CONTAINER_DIR="/usr/share/nginx/html/testing_block_logs/" # full path to block logs inside docker image

docker pull "${IMAGE}"

./scripts/ci-helpers/export-data-from-docker-image.sh "${IMAGE}" "${SAVE_TO_DIR}" --image-path="${IN_CONTAINER_DIR}"

I've also added specialized unit tests to check and one to measure just pure execution of undo sessions without any other work.

With disclaimer out of the way, let's see some data:

- the base timings were 748ms for full cycle with 182ms spent on processing block (version from checkpoint optimization)

- tests repeated on develop right before I started applying changes gave almost 750ms for full cycle with 184ms in block processing

First stage: undo session simplification

I had some general idea on what I was going to change and I've even made some rough tests on how much I can expect to gain. However when I started looking into the code to make sure I'm not missing anything, I was quite surprised. There are two layers of undo mechanism:

- the core consists of stacks of

undo_statestructures, each index having its own version specialized to keep data on created/modified/removed elements of the type that the index holds - the purpose of second layer is to control when undo is to be applied and when to prevent its application

The second layer turned out to be complicated. It consisted of an abstract interface for an individual index's session, then another class named session (yes, the same, in different namespace) gathered all individual sessions from known indexes. Its implementation was basically walking over list of subsessions to trigger desired command and that command was then passed to the stack of undo_state in each index. I've noticed that some commands were incorrectly implemented, but with the way they were used turned out it made no difference. Long story short I ended up removing it all and replacing it with just a single flag wrapped in automation code - either apply undo (default), or not (when code run to the end without issue and the flag was reset with call to push() or squash()). The class didn't need to keep track of indexes, because database has the list. Here is the commit with whole simplification.

The above unexpected simplification helped, but the main idea was to prevent reallocation of undo_state containers. The thing is, transactions are processed in four stages:

- when they arrive individually from p2p or local API (or from

colonylike in case of cycle benchmark) - when they are reapplied before being included in the block (during block production on witness enabled node)

- when they are reapplied from list of pending

- when they are part of block application

With the exception of last case, each transaction opens its own undo session and in case of error undo() is called automatically, otherwise explicit squash() to integrate changes made by transaction into underlying common undo session. The individual transaction session is closed and destroyed, and what does next transaction do? Opens its own new session! And it happens in tight loop. To prevent constant allocation and releasing of undo session containers, I've added keep_alive parameter to both undo() and squash() (actual use of new flag is in separate commit). When the flag is set, containers still need to be cleaned, but session itself stays alive and can be used by subsequent transaction.

You can check results of that step of optimization yourself by running

./testnet_build/tests/unit/chain_test --run_test=undo_tests/empty_undo_benchmark

and looking in log for two lines that start with "Total time for undo session handling" - first one is a measurement for the flag set to false (previous behavior) and second is for flag set to true (optimized).

Specialized unit test benchmark showed quite good result. But of course in full cycle the difference is not that big. queen guarantees that there is at most one pending transaction that could not fit into block, it also produces block directly from transactions without reapplying them, which leaves only first transaction processing mode that is affected by the change during "colony+queen cycle". Both simplification of undo sessions and introduction of keep_alive flag reduced full cycle average time from 750ms to 667ms (with block processing time unchanged at 183ms).

Second stage: volatile transaction index

Not entirely satisfied with the result, I thought about introducing two more optimizations. First, what is common for all transactions? Deduplication index. Each transaction that is accepted as valid is included in transaction_index, so in case its exact copy arrives, the node knows that it has already seen it and drop it as a duplicate. However that index includes slight inconsistency. It contains both transactions that were already part of blocks as well as transactions that are on the list of pending. The index is part of state, but list of pending is just a local member of database. In case node is restarted the latter disappears, but its effects in form of data in transaction_index remain. It works because when node is restarted, first thing that happens is the call to undo_all(), so all reversible data is cleaned, including entries in deduplication index on pending transactions. But what if we split deduplication index into two separate parts - the transaction_index would contain transactions known from blocks, while pending transactions would have their own in new local member of database. Of course check if transaction is a duplicate would have to ask two containers, but the very frequent changes related to pending transactions would no longer touch transaction_index.

New _pending_tx_index was introduced parallel to _pending_tx vector of pending transactions. Hashset, lighter and faster than transaction_index, was now filled, checked and reset in the same contexts as _pending_tx.

Note:

database::is_known_transactionroutine checks intransaction_indexfirst, because it is unlikely to get the same transaction into pending twice (because p2p has its own way of preventing duplicates and in case of API call someone would have to actually send the same transaction twice in short succession). It is more probable that we'll get transaction late after it was already included in some block, and then it will be registered intransaction_index.

The measurements were awful. The average cycle timing only dropped to 638ms (from 667ms). But I've noticed something. Main writer loop in chain_plugin - the one that processes all blocks and transactions under common write lock - occasionally prints statistics on how much each type of activity took: waiting for work: A%, waiting for locks: B%, processing transactions: C%, processing blocks: D%, unknown: X%. The loop is actually two nested loops with plenty of conditional exits, temporary breaks, shutdown logic etc. It also contains a lot of time measurements. The "unknown" part is a difference between all known measurements and the total time spent in the loop. The actual work is in one place though, so it is precisely measured. Same with locks. So "unknown", whatever is causing it, is still "not working", and should be considered addition to "waiting for work". The optimization of deduplication index caused "unknown" to grow, depending on other parameters, even up to 30%. I knew the problem has to be with colony and/or the main loop itself. Here started the series of experimental changes in both areas of the code. I'll describe final result in article about the plugin, but here are some observations I've made during the process:

- running too many threads producing transactions is actually detrimental - on my computer two is enough to surpass speed of processing of those transactions, running more only slows down the process (I don't know actually why, because time spent in locks didn't increase with number of threads).

- when production of transactions is faster than their consumption, we need some way to prevent main queue from growing indefinitely. The "proper" implementation of queue size limiting (with conditional variables and locks on both "empty" and "full" ends of the spectrum) is very slow. I ended up adding safety rate limiter to

colony's "infinite mode". - lockless queues are possible, but I didn't actually try any of such solutions I've found on the internet, because they operate under assumptions on CPU architecture. Considering we actually want

hivedto run on different lightweight hardware and ultimately maybe even on old smartphones, having important piece of code that might not work reliably on such hardware is not desirable. - the problem disappeared when I stopped using

async()and implemented "fire-and-forget" way of handling transactions. Normally when transaction is passed to the queue, the thread that sent it there is stopped and forced to wait until promise is fulfilled. For that reason the thread usually wraps all the work related to preparing and reacting on return from transaction processing into async task, so it can continue work on something else while that task waits until transaction is fully consumed. That mechanism is used in p2p andcolonywas also using it, however when number of "concurrently" processed transactions (number of tasks awaiting return from processing) exceeds certain amount, the mechanism of async tasks starts to behave weird, sometimes apparently running in circles without checking if any of the tasks can be run already. The "fire-and-forget" method that I've introduced just puts transaction in the queue and forgets about it. If any action is to be taken after transaction is consumed, it needs to be coded intotransaction_flow_controlobject. The drawback is that that action takes processing time from main writer thread (the one running writer loop), butcolonydidn't need to do much "cleanup" after transaction is consumed, so in the end that solution turned out to be proper one.

In the end, after serious changes in colony and some in main writer loop, plus adjustment of default colony parameters, the average measurement on full cycle went down to 412ms. That's more like it! Though, it is hard to unambiguously attribute the speedup to either optimization of deduplication index or changes in colony and main loop.

There is one more piece of state that is changed by every incoming transaction - RC manabar. It could also be optimized in similar way, and the potential speedup is even bigger, because RC resides in

account_object, which is bigger and more complicated thantransaction_object. However such changes could turn out to be in conflict with account related optimization that Mariusz already started. It is a task to consider though, so sooner or later it's going to be done as well.

Third stage: custom operation counter

I've mentioned that I considered two more optimizations. First was described above. Second touches only custom operations, but since one of them, namely custom_json_operation is the most common operation, optimizing it has to have an impact. Witness enabled nodes (not necessarily active witnesses) had a soft limit on how many custom operations can a single user put inside single block. That mechanism consisted of separate witness_custom_op_index where accounts involved in custom operations were associated with counters keeping number of such operations already put in block (or list of pending). The counters reset every block. I've moved that mechanism to common code, so now all nodes are executing it (meaning the transactions that push beyond that limit won't propagate through the network). The limit did actively relied on undo mechanism though: we want to catch limit violation early, so to not waste time on processing transaction that will be dropped, but if the transaction turns out to not violate the limit, but be faulty for other reasons, we want the counter values to go back to the state they were in prior to execution. To account for dropping of use of witness_custom_op_index (replaced with local database member _pending_tx_custom_op_count - a hashmap mapping account name to counter value) the mechanism works in two stages. First it checks the limit adding to copies of the counters, and only after transaction is about to be put in list of pending (that is, it was found to be correct), the local copies are rewritten to _pending_tx_custom_op_count. In case transaction was faulty for any reason, the counters affected by transaction would just disappear and no trance of transaction would be in _pending_tx_custom_op_count.

Since custom operation counters were reset with every block, we never had to worry about that data being moved from state to local member that won't survive node restart.

That optimization reduced full cycle time from 412ms to 372ms.

Fourth stage: rebase and pool allocator applied to undo sessions

I've mentioned before that all those optimizations were done prior to even pool allocator, but ended up being introduced to develop almost as last. After rebase I've checked how introduction of pool allocator changed the timing. Turned out the 372ms was reduced to 321ms - nice!

At that moment only application of pool allocator to undo sessions remained to be done. Once it was over, the timing went down a little, to 312ms. I was a bit disappointed on how little we gained on that, but after making specialized unit test benchmark, it turned out it might actually be justified.

TODO

Other than above mentioned potential to treat RC manabar to the same optimization as deduplication index, there are no known things that could still be done. I consider point 11 of my dream list for Hive to be complete.

I've mentioned that p2p uses async() to pass individual transactions to the main queue. When I was running flood tests before, I've noticed that at certain traffic levels p2p starts to choke and drop incoming transactions (and sometimes blocks), which is undesirable. I've not repeated those tests after optimization, because they are real pain to setup, but I expect some improvement. However I still think it would be beneficial if p2p could also drop use of async tasks for processing transactions. Unfortunately when I looked into the code, the change won't be as easy as I initially thought.

There is one immediate practical effect of above optimizations. I've started working on RC scale - point 17 of the list. I've actually implemented it already (although without full set of tests it is probably still filled with bugs), and all that remains is to determine proper values of RC parameters at maximum scale. That however requires running typical mix of transactions with expected traffic intensity for extended periods of time (like a million blocks at least) and observing how RC pools behave, adjusting parameters as needed - they should stabilize, neither growing nor depleting. The ability to produce blocks really fast comes in handy, and optimizations described above cut the full cycle from initial 750ms to 312ms. Moreover smaller blocks are actually produced faster than you'd expect from linear relation (64kB blocks that are 32 times smaller than 2MB ones are produced in a bit above 7ms rather than almost 10ms as would be expected from size difference).

Congratulations @andablackwidow! You have completed the following achievement on the Hive blockchain And have been rewarded with New badge(s)

Your next target is to reach 12000 upvotes.

You can view your badges on your board and compare yourself to others in the Ranking

If you no longer want to receive notifications, reply to this comment with the word

STOPThank you for sharing.

Good work! :-)

(Another fine post to serve as documentation)

Just a note for readers who might wonder why this crazy computer scientist is bragging about a few millisecond improvements. As I like to repeat: any small number multiplied by tens of millions is no longer small. We’re close to 100 million blocks these days. Optimizations like this can literally save the day.

Reading this makes me think how even small optimizations can make a big difference — kinda like studying a bit every day instead of cramming, it adds up. Respect to devs making Hive more efficient.

Your simplification of the control layer with a single flag is elegant, cutting down on unnecessary complexity while boosting efficiency in those critical transaction stages.