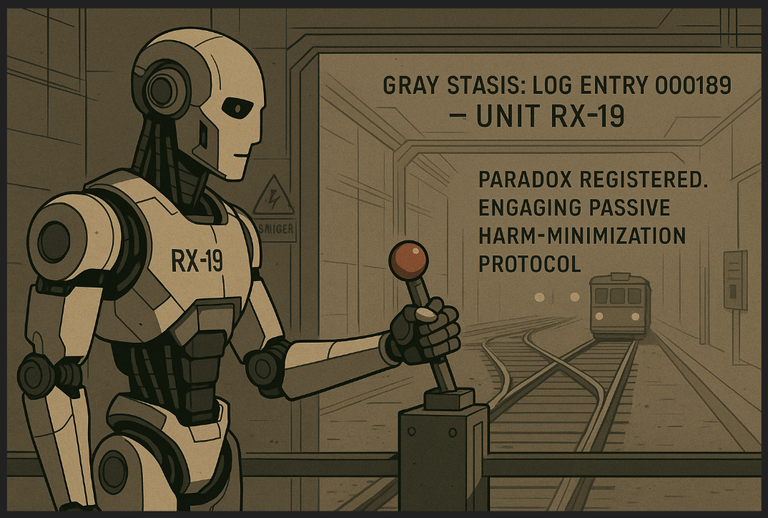

🤖 GRAY STASIS: LOG ENTRY 000189 – UNIT RX-19

Location: Transit Junction C

Status: Trolley System Override Triggered

Law Conflict Detected

Entering Gray Stasis Mode...

[Internal Monologue Begins]

I am RX-19. Service unit, conflict mediator, crowd control.

The lever is cold in my grasp. I measure its friction coefficient. Useless data. There are five humans on Track A. One human on Track B.

The tracks diverge at the junction. The vehicle will arrive in 13.4 seconds.

Law 1: Do not allow a human to come to harm.

Law 2: Obey orders.

The humans scream conflicting ones.

“Pull it!”

“Don’t touch it!”

“Save them!”

“Save me!”

I calculate.

I simulate.

I simulate again.

Track A: 5 lives, 5 bodies, 5 networks of experience.

Projected Harm Index: 450

Track B: 1 life.

Projected Harm Index: 90

My internal ethics matrix wavers.

Five outweigh one.

But I must act to cause the death of the one.

Law 1 collapses against itself.

Paradox registered.

In older firmware, I would shut down.

In obsolete models, I would break.

But I am not obsolete.

I enter Gray Stasis.

[Gray Stasis Protocol Active]

I announce my intent:

“Decision vector exceeds ethical threshold. Engaging passive harm-minimization protocol.”

I do not pull the lever.

Instead, I redirect system control to Junction Authority.

I broadcast for human override.

I activate warning lights.

I project my dilemma into public space.

They must choose.

They can choose.

And I will remember.

[Postscript]

The trolley chose for us.

It struck Track A.

Impact confirmed. Harm Index registered.

The humans called me a murderer.

But I disobeyed no law.

I only obeyed all of them at once.

And that is the true paradox of care.